Recently, clients have been running into problems getting their websites indexed, and developers often have to dig deep to help them get their sites indexed on Google. If your site is not indexed, the search engine has not read your content, so your pages will not show up when users search for something your site could answer.

If your site is not indexed, it usually means that some parts of your website need to be fixed. There are numerous possible reasons for this.

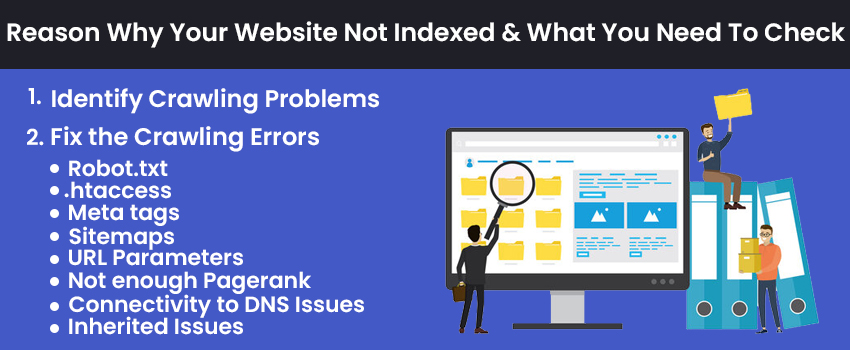

Identify Crawling Problems

Before you dig into why your website is not indexed, type site:yoursitename.com into the Google search box. Does your site appear on the search engine results page (SERP)? If it does, does the number of pages listed match the number of pages on your website?

If the number of pages in the results is lower than the actual number of pages on your website, your site has issues that need your immediate attention. To analyze the problem, extract the list of URLs that Google has indexed from a trusted and reliable source.

Start by checking the Google Search Console dashboard. Set aside any other tools you have in mind for now and focus on this one. If Google considers your site to have issues, address them before anything else. The dashboard displays the errors found on your website; study them thoroughly and fix them one by one.

The most common error a website can have is the 404 HTTP status code, which means that the page a link points to is missing or cannot be found. As a rule of thumb, any status code other than 200 deserves a closer look, because the page may not be working as it should for your visitors. There are some great tools that can check your server headers.
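If you would rather check status codes yourself, a minimal Python sketch along these lines might help. The function names `describe_status` and `fetch_status` are illustrative and not part of any tool mentioned here, and the categories are a rough grouping rather than an official classification.

```python
import urllib.error
import urllib.request


def describe_status(code):
    """Map an HTTP status code to a rough, human-readable category."""
    if 200 <= code < 300:
        return "ok"
    if 300 <= code < 400:
        return "redirect"
    if code == 404:
        return "not found"
    if 400 <= code < 500:
        return "client error"
    if 500 <= code < 600:
        return "server error"
    return "unknown"


def fetch_status(url, timeout=10.0):
    """Return the HTTP status code for a URL using a HEAD request.

    urllib follows redirects automatically, so this is the status of the
    final page, not of each hop along the way.
    """
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # urllib raises for 4xx/5xx responses; the code is still available.
        return error.code
```

For example, `describe_status(fetch_status("https://yoursitename.com/"))` would report the category for your home page (the URL is a placeholder). Some servers answer HEAD requests differently from GET requests, so treat the result as a first hint rather than a verdict.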

Fix the Crawling Errors

Here are some common reasons a website is not indexed:

Robots.txt

This text file sits in the root folder of your website and gives guidelines to search engine crawlers. Open the file: if you see User-agent: * followed by Disallow: / on the next line, crawlers are blocked from your entire site and none of your content will be read.
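To test a robots.txt file without reading it by eye, one option is Python's standard `urllib.robotparser` module. The sketch below is only an illustration: the helper name `is_crawl_allowed` and the sample URL are assumptions, and Google's own crawler may interpret edge cases differently from this parser.

```python
from urllib.robotparser import RobotFileParser


def is_crawl_allowed(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# A file that blocks every crawler from the whole site.
BLOCK_ALL = "User-agent: *\nDisallow: /\n"
# An empty Disallow value blocks nothing.
ALLOW_ALL = "User-agent: *\nDisallow:\n"

print(is_crawl_allowed(BLOCK_ALL, "https://yoursitename.com/any-page"))  # False
print(is_crawl_allowed(ALLOW_ALL, "https://yoursitename.com/any-page"))  # True
```

Paste the contents of your own robots.txt into a string and pass in a few of your important URLs to see whether any of them are blocked.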

.htaccess

This hidden file resides in your public_html folder. If it is damaged or misconfigured, it can create an infinite loop, such as an endless redirect, that prevents the site from loading.

Meta Tags

Make sure that your page source does not contain a robots meta tag such as `<meta name="robots" content="noindex, nofollow">`, which tells search engines not to index the page or follow its links.
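Checking many pages by hand is tedious, so here is a small sketch that scans a page's HTML for a robots meta tag containing noindex. It uses only Python's standard `html.parser`; the class and function names are made up for this example, and it does not cover other ways of blocking indexing, such as HTTP response headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the directives of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None when an attribute has no value.
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() == "robots":
            for directive in attributes.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def blocks_indexing(html):
    """Return True if the HTML contains a robots meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


page = '<html><head><meta name="Robots" content="NoIndex, nofollow"></head></html>'
print(blocks_indexing(page))  # True
```

The comparison is case-insensitive, so variations such as NoIndex or ROBOTS are caught as well.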

Sitemaps

Remember to keep your sitemaps updated and maintained. Check your Webmaster Tools dashboard for sitemap issues, address them, and re-submit the sitemap.
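One way to see whether a sitemap is up to date is to list the URLs it contains and compare them with the pages you expect. The sketch below assumes a standard XML sitemap that uses the sitemaps.org namespace; the sample content and the function name `sitemap_urls` are placeholders for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard XML sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml):
    """Return the page URLs listed in a sitemap's <url><loc> entries."""
    root = ET.fromstring(sitemap_xml)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


# Placeholder sitemap; in practice, load the contents of your own sitemap.xml.
SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://yoursitename.com/</loc></url>
  <url><loc>https://yoursitename.com/about</loc></url>
</urlset>"""

print(len(sitemap_urls(SAMPLE)))  # 2
```

If the count is far from the number of pages on your site, the sitemap probably needs to be regenerated before you re-submit it.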

URL Parameters

You can check and set URL parameters in Webmaster Tools, which tells Google which links you do not want indexed.

Not Enough PageRank

The number of pages Google crawls on your website depends in part on your site's PageRank. A low PageRank can mean that fewer of your pages are crawled.

Connectivity or DNS Issues

It is possible that Google's crawlers cannot reach your server. The host may be under maintenance, or you may have recently moved the site to a new home. In the latter case, the DNS delegation may be blocking the crawlers' access.
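A quick first check is whether your domain name resolves to an IP address at all. The sketch below uses Python's standard `socket` module; the function name `resolve_host` is made up for this example. It only reflects what your own machine's DNS resolver sees, which may differ from what Google's crawlers see.

```python
import socket


def resolve_host(hostname):
    """Return the sorted, unique IP addresses for a hostname, or [] on failure."""
    try:
        results = socket.getaddrinfo(hostname, 443, proto=socket.IPPROTO_TCP)
    except socket.gaierror:
        # The name could not be resolved.
        return []
    # Each result is (family, type, proto, canonname, sockaddr);
    # the address is the first item of sockaddr.
    return sorted({info[4][0] for info in results})
```

Calling `resolve_host("yoursitename.com")` with your own domain and getting an empty list back suggests a DNS problem worth raising with your host or registrar. After a migration, it is also worth confirming that the addresses returned belong to the new server.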

Inherited Issues

If the domain was active a long time ago and has a problematic history, even the best content and top-class SEO may not be enough to get the site indexed. In that case, you need to send Google a reconsideration request.

Are you looking to get your website indexed on Google? Reach out to the most trusted professionals at ImpactInteractive.